Why Manufacturers Are Modernizing Carefully and Still Pulling Ahead

Welcome to DX Brief - Manufacturing, where every week we interview practitioners and distill industry podcasts and conferences into what you need to know.

In today's issue:

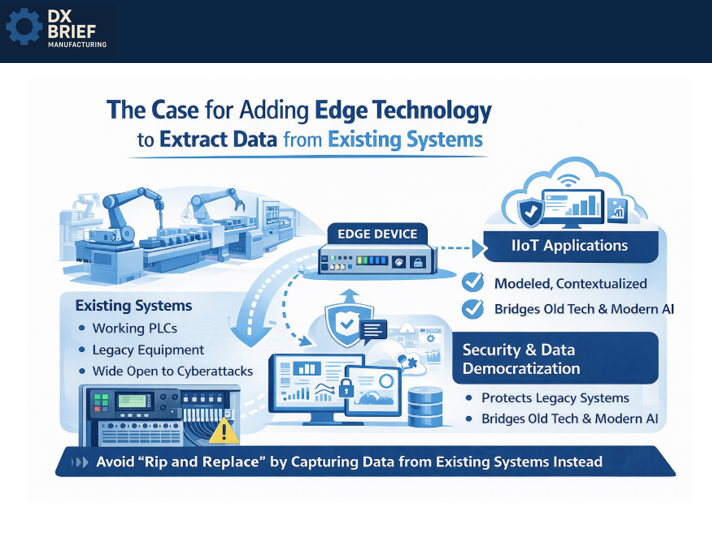

The case for adding edge technology that extracts data from existing systems.

2026 state of automation report: Manufacturers know automation is mandatory, yet they're pulling back on cutting-edge investments.

Schneider's "boring transformation" that scaled to 150 smart factories in seven years.

1. The case for adding edge technology that extracts data from existing systems

Manufacturing Hub podcast: How Modern Plants Actually Bridge Legacy Automation and AI w/ Benson Hougland (Jan 28, 2026)

Background: Everyone wants industrial AI. But the AI you use every day (ChatGPT, Claude, etc.) can hallucinate, in part because its training data contains conflicting sources. Benson Hougland, a 30-year controls veteran at Opto 22, says you cannot tolerate that in an industrial setting. The solution isn't better AI models. It's trustworthy, contextualized data from the edge, layered on so you don't have to replace working equipment.

TLDR:

Industrial AI requires data integrity that consumer AI doesn't. Hallucinations are unacceptable when controlling physical processes. The fix starts at the edge with modeled, contextualized data.

Layer edge technology on top of brownfield systems rather than replacing working PLCs. A tier-one manufacturer connected injection molding machines across four plants without touching existing controls.

Cybersecurity isn't a product; it's a process. Start with eliminating default passwords and back doors, then focus on network segmentation to protect legacy systems that were never designed with security in mind.

Start with the three pillars: controls, security, data. Hougland breaks the modernization challenge into three buckets that must work together.

Controls: the control layer has to deliver 100% uptime and be solid and reliable.

Security: account-based authentication, network segmentation, audit trails.

Data democratization: how do you take data from machine control programs and make it available to upstream applications?

Most manufacturers treat these as separate initiatives. They upgrade PLCs, then think about cybersecurity, then worry about data. But modern edge devices can address all three simultaneously.

Layer on top, don't rip and replace. The ROI case for replacing a working PLC is nearly impossible to make. But the case for adding edge technology that extracts data from existing systems? Much easier.

A tier-one automotive supplier did exactly this across four plants running a mix of injection molding machines. They layered edge devices on top to capture runtime data, errors, and faults, and fed that upstream without touching the machine controls.

This approach also solves the obsolescence problem. That old SLC 500? It's wide open to cyberattacks if anyone gains network access. Edge technology can protect downstream legacy systems while simultaneously democratizing their data. You get security and data visibility from a single intervention.

Cybersecurity is a process, not a product. The goalposts move every minute. Hougland identifies four non-negotiables: eliminate default passwords (yes, some manufacturers still use admin/password), close back doors and open ports, implement account-based authentication with audit trails, and segment networks to protect systems that were never designed for security.
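As a minimal sketch of the first non-negotiable, the check below flags devices that still log in with a factory-default pair. The `Device` record, the `KNOWN_DEFAULTS` list, and the inventory entries are hypothetical illustrations, not something described in the episode; it assumes credentials were noted down during a manual audit.

```python
from dataclasses import dataclass

# Hypothetical default pairs; a real audit would take these from the
# vendor documentation for each device model.
KNOWN_DEFAULTS = {("admin", "password"), ("admin", "admin"), ("root", "root")}


@dataclass(frozen=True)
class Device:
    name: str
    username: str
    password: str


def find_default_credentials(devices):
    """Return the names of devices whose login matches a known default pair."""
    return [
        d.name
        for d in devices
        if (d.username.lower(), d.password) in KNOWN_DEFAULTS
    ]


# Hypothetical inventory gathered during an audit walk-through.
inventory = [
    Device("molding-press-01", "admin", "password"),
    Device("edge-gateway-01", "ops_jlee", "x7!Qm2#pR9"),
]

print(find_default_credentials(inventory))  # ['molding-press-01']
```

An audit worksheet holding live passwords is itself sensitive, so in practice it would be kept offline or destroyed once the defaults are changed.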

The IT/OT friction is real. When you ask IT for a static IP, you've shifted responsibility for securing that device to them. That's why they're the "department of no."

The solution: OT professionals must develop basic networking and cybersecurity literacy. The lines are blurring, and waiting for IT to handle everything creates dangerous gaps.

Data democratization means context, not just transport. Everyone talks about OPC-UA, MQTT, REST APIs. But Hougland argues the transport mechanism isn't enough. What matters is what's being transported. Data without context is just noise.

The goal is modeled, contextualized data at the edge so upstream applications can use it immediately – whether that's a historian, a SCADA system, or eventually an AI agent.
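To make "context, not just transport" concrete, here is a rough sketch of the difference between a bare register value and a modeled reading. The field names, the topic layout, and the example machine are assumptions chosen for illustration; they are not a schema from Opto 22 or from any particular protocol.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ContextualizedReading:
    # Hypothetical context fields; a real model would follow your own standard.
    site: str
    area: str
    machine: str
    signal: str
    value: float
    unit: str
    timestamp: str  # ISO 8601, UTC
    quality: str


def to_payload(reading):
    """Build a hierarchical topic path and a JSON body for one reading."""
    topic = "/".join([reading.site, reading.area, reading.machine, reading.signal])
    return topic, json.dumps(asdict(reading), sort_keys=True)


raw_value = 212.4  # a bare number, as it might sit in a PLC register

reading = ContextualizedReading(
    site="plant-2",
    area="molding",
    machine="press-07",
    signal="barrel_temp",
    value=raw_value,
    unit="degC",
    timestamp="2026-01-28T14:05:00Z",
    quality="good",
)

topic, body = to_payload(reading)
print(topic)  # plant-2/molding/press-07/barrel_temp
print(body)
```

The same topic and body could travel over MQTT, a REST call, or another transport; the point of the sketch is that the meaning rides along with the number.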

This also eliminates vendor lock-in. If your systems communicate over open standards and carry their context with them, you're not tied to any single vendor's proprietary mechanism. You can change upstream applications without rearchitecting your edge infrastructure.

What to do about this:

→ Identify one brownfield data extraction win. Find a machine or line where you want visibility but can't justify PLC replacement. Pilot edge technology that layers on top, captures the data you need, and proves value before expanding. Start with equipment that's causing the most operational blind spots.

→ Eliminate default credentials this month. This is the lowest-hanging fruit in industrial cybersecurity. Audit every networked device for default passwords. If your organization hasn't done this systematically, you have an attack vector that's trivially exploitable.

→ Invest 4 hours in basic networking education for your OT team. Resources from practitioners like Josh Barris and Vlad Romanov (Manufacturing Hub) exist specifically for control engineers. Topics like IP addressing, managed switches, and VLANs are no longer optional knowledge.
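For the networking topics in the last item, a small sketch using Python's standard `ipaddress` module shows the kind of reasoning involved: given a device's IP address, which segment does it actually sit in? The subnets, segment names, and device list are hypothetical, and the sketch assumes each VLAN maps to exactly one IP subnet, which is a common convention but not a universal one.

```python
import ipaddress

# Hypothetical segmentation plan: one subnet per network segment.
SEGMENTS = {
    "controls": ipaddress.ip_network("10.10.20.0/24"),
    "office": ipaddress.ip_network("10.10.30.0/24"),
}


def segment_of(address):
    """Return the name of the segment containing `address`, or None."""
    ip = ipaddress.ip_address(address)
    for name, network in SEGMENTS.items():
        if ip in network:
            return name
    return None


def misplaced(devices):
    """Return names of devices whose address is outside their expected segment.

    `devices` maps a device name to an (ip, expected_segment) pair.
    """
    return [
        name
        for name, (ip, expected) in devices.items()
        if segment_of(ip) != expected
    ]


# Hypothetical device list: a legacy PLC that ended up on the office network.
devices = {
    "plc-slc500": ("10.10.30.15", "controls"),
    "hmi-01": ("10.10.20.8", "controls"),
}

print(misplaced(devices))  # ['plc-slc500']
```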

2. 2026 state of automation report: Manufacturers know automation is mandatory, yet they're pulling back on cutting-edge investments

Ctrl+Alt+Mfg Podcast with Mark Hoske, Editor-in-Chief at Control Engineering: Inside the 2026 State of Automation Report (Jan 20, 2026)

Background: Control Engineering's 2026 State of Automation report surveyed 147 engineers and system integrators – the people who actually buy and specify automation – to find out where manufacturers are getting real returns. The answer? Advanced process controls and control system optimization lead at 41% for highest ROI, followed by a tight cluster of operations visibility (26%), motion control and robotics (25%), and quality control systems (24%). But here's the twist: there's been a 9-percentage-point shift from moderate to conservative adoption of leading-edge technologies year-over-year.

TLDR:

Advanced process controls deliver the highest perceived ROI at 41%, with operations visibility, motion control, and quality systems clustered well behind at 24-26%. Prioritize control system optimization before chasing newer technologies.

70% of respondents cite cost reduction and operational efficiency as the primary driver for automation investment, but 35% highlight the creation of high-skill roles as a key workforce outcome. Automation is also creating jobs, not just eliminating them.

There's a 9-point shift toward conservative adoption of leading-edge tech, yet respondents acknowledge those same technologies could address the risks fueling the hesitation. A classic innovation paradox worth resolving.

The ROI hierarchy is more stable than you think. While everyone chases the next shiny technology – AI agents, digital twins, 5G connectivity – the data tells a different story. The technologies producing the highest ROI remain remarkably consistent:

Advanced process controls and control system optimization, including no-code and low-code programming, topped the list at 41%.

Operations visibility through dashboards and multi-level HMI/SCADA systems came in at 26%.

Motion control and robotics hit 25%.

Advanced quality control systems reached 24%.

Meanwhile, IoT and edge computing platforms (technologies that dominated conference keynotes for years) came in at just 8% for perceived ROI. This doesn't mean IoT is worthless. It means the fundamentals still matter most. Before investing in the frontier, ask whether you've optimized what you already have.
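The survey figures above can be set against a planned budget with a few lines of arithmetic. In the sketch below the percentages come from the report as quoted here, while the category keys, the `out_of_order` helper, and the budget numbers are hypothetical. Note that the survey measures perceived ROI among respondents, not realized returns, so a flagged pair is a prompt for discussion rather than a verdict.

```python
# Share of respondents naming each category as highest ROI (from the report).
SURVEY_ROI = {
    "process_controls": 41,
    "operations_visibility": 26,
    "motion_robotics": 25,
    "quality_systems": 24,
    "iot_edge": 8,
}


def out_of_order(budget):
    """Return (lower_roi, higher_roi) pairs where the lower-ranked
    category receives more budget than the higher-ranked one."""
    pairs = []
    for low in budget:
        for high in budget:
            if SURVEY_ROI[low] < SURVEY_ROI[high] and budget[low] > budget[high]:
                pairs.append((low, high))
    return pairs


# Hypothetical planned spend, in thousands of dollars.
planned = {
    "process_controls": 150,
    "operations_visibility": 100,
    "iot_edge": 300,
}

for low, high in out_of_order(planned):
    print(f"{low} outspends {high} despite lower perceived ROI")
```

With this example budget, `iot_edge` is flagged against both of the other categories, which is the over-indexing pattern worth questioning.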

The conservative shift reveals a paradox worth exploiting. Year-over-year data shows a 9 percentage point shift from moderate to conservative adoption of leading-edge automation technologies. Only 12% of respondents identified as early adopters – the same percentage as last year.

Risk aversion is rising. But here's the irony respondents themselves noted: the very technologies they're hesitant to adopt could address the risks fueling that hesitation. Advanced automation can lower operational risk, reduce dependency on scarce labor, and improve safety outcomes.

The implication? Manufacturers who push through the conservative instinct and invest strategically in proven automation technologies, rather than bleeding-edge experiments, will gain competitive advantage while others wait.

Automation is creating higher-skill roles, not just eliminating jobs. The workforce narrative is shifting. 35% of respondents highlight the creation of high-skill roles as an important workforce impact of automation. Another 33% are using automation to continue production by filling skills gaps. In other words, they are automating because they can't hire, not because they want to cut headcount.

The related recommendation from the report: automation reduces low-skill labor demand, but industries must address the growing skills gap through upskilling and cross-functional training.

What to do about this:

→ Audit your ROI assumptions against the data. Map your current and planned automation investments against the ROI hierarchy: process controls, operations visibility, motion control, quality systems. If you're over-indexed on IoT and edge without solid fundamentals, rebalance.

→ Quantify the paradox in your own organization. Identify where conservative adoption of automation is actually increasing operational risk, whether through labor dependency, quality variability, or safety exposure. Present the business case for strategic investment as risk mitigation, not innovation theater.

→ Build an upskilling roadmap tied to automation deployment. For every automation project, define the high-skill roles it creates and the training required. Budget for workforce development as a line item in your automation business case, not as an afterthought.

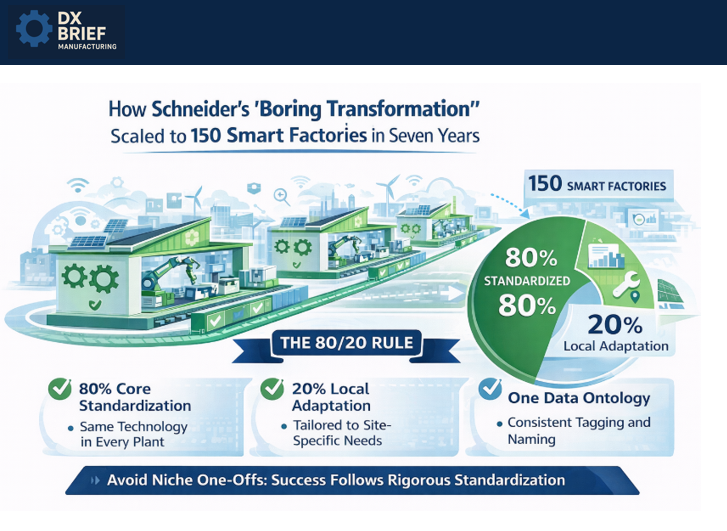

3. Schneider's "boring transformation" that scaled to 150 smart factories in seven years

The Automation Podcast with Dante Vaccaro, Digital Transformation Specialist at Schneider Electric (Jan 21, 2026)

Background: Dante Vaccaro helps manufacturers build digital roadmaps at Schneider Electric, but the most compelling story he tells is Schneider's own transformation. They started with 11 smart factories in 2018. Today they have 150. The secret? Their VP calls it "a boring transformation." Every plant looks exactly the same: same technology, same use cases, same operating model. Here's the 80/20 framework that made scale possible.

TLDR:

Monitoring OEE without an action plan is like buying a scale to lose weight. You have to focus on the drivers (training, reliability, process issues), not just the metric.

Successful transformations follow the 80/20 rule: 80% standardized core across all facilities, 20% customized to local needs. Schneider scaled to 150 factories because they resisted the temptation to let every plant do its own thing.

Data ontology – your tagging and naming structures – is the hidden tax that kills scalability. The "sins of our fathers" in hastily built control systems create technical debt that blocks every future initiative.

Digital transformation is a strategy, not a software purchase. The goal is digital continuity across operations and IT to gain actionable insights. But many manufacturers skip straight to technology without aligning to strategic business outcomes.

Vaccaro shares a revealing example: "A lot of people come to me and say, 'Our digital transformation is we need to monitor OEE in real time.' I ask, 'What's your plan for that?' They say, 'If we monitor OEE, we'll increase 5% efficiency.' That's like buying a scale and trying to lose weight. If I step on that scale every five minutes, am I going to lose weight?"

The drivers of OEE improvement are training, reliability, and process discipline. The dashboard just shows you the score.
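A short sketch shows why the score alone says little. It uses the commonly cited definition of OEE as availability times performance times quality; that formula, the function names, and the shift figures are assumptions for illustration and were not spelled out in the episode. Breaking the score into its factors at least points to which driver deserves attention first.

```python
def oee_components(planned_min, downtime_min, ideal_cycle_min, total_count, good_count):
    """Compute OEE and its three factors for one shift.

    availability = run time / planned time
    performance  = (ideal cycle time * total count) / run time
    quality      = good count / total count
    """
    run_min = planned_min - downtime_min
    availability = run_min / planned_min
    performance = (ideal_cycle_min * total_count) / run_min
    quality = good_count / total_count
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


def weakest_factor(components):
    """Return the name of the lowest of the three OEE factors."""
    factors = {k: v for k, v in components.items() if k != "oee"}
    return min(factors, key=factors.get)


# Hypothetical shift: 480 planned minutes, 60 minutes down,
# 0.5 minute ideal cycle, 756 parts made, 740 of them good.
shift = oee_components(480, 60, 0.5, 756, 740)

print(f"OEE: {shift['oee']:.1%}")
print("Weakest factor:", weakest_factor(shift))  # availability
```

Using one shared function like this across plants also avoids each site calculating OEE its own way.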

The 80/20 rule is why Schneider scaled, and why most transformations don't. Schneider's smart factory program succeeded because they enforced ruthless standardization: 80% of technology, use cases, and operating practices are identical across all 150 facilities. Only 20% is customized for location-specific needs.

"A lot of people want to do the niche one-offs," Vaccaro observes. But that's exactly what prevents scale. When every plant has its own approach to calculating OEE, its own manufacturing execution system, and its own data structures, you can't roll up insights, share best practices, or deploy improvements globally.

Data ontology is the hidden killer of transformation projects. "The sins of our fathers on the control side" – that's how Vaccaro describes the accumulated technical debt from decades of "hurry up and get the system running" implementations. PLC programs are flat. Tag names are inconsistent. Nobody knows where data is coming from.

This matters because AI and analytics depend on knowing the context of your data. "If every tag is named 'pump' but I don't know what machine it's coming from, it's very hard to create a repeatable template." The fix requires extra work upfront – disciplined naming structures, clear hierarchies – but Vaccaro promises "it will pay dividends down the line."
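One way to enforce a naming structure is to check tags against it automatically. The sketch below assumes a hypothetical `site/area/machine/signal` convention in lowercase; the pattern and the sample tags are illustrative and are not Schneider's standard.

```python
import re

# Hypothetical convention: site/area/machine/signal, all lowercase.
TAG_PATTERN = re.compile(
    r"(?P<site>[a-z0-9-]+)/"
    r"(?P<area>[a-z0-9-]+)/"
    r"(?P<machine>[a-z0-9-]+)/"
    r"(?P<signal>[a-z0-9_]+)"
)


def parse_tag(tag):
    """Return the tag's context as a dict, or None if it breaks the convention."""
    match = TAG_PATTERN.fullmatch(tag)
    return match.groupdict() if match else None


def invalid_tags(tags):
    """Return the tags that do not follow the naming convention."""
    return [t for t in tags if parse_tag(t) is None]


tags = [
    "plant-2/molding/press-07/coolant_pump_run",
    "pump",   # no context: which machine is this?
    "Pump1",  # inconsistent casing, still no context
]

print(invalid_tags(tags))  # ['pump', 'Pump1']
print(parse_tag(tags[0])["machine"])  # press-07
```

A check like this could run as part of accepting work from OEMs and integrators, so nonconforming tags are caught before they reach production.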

What to do about this:

→ Audit your transformation initiatives against the 80/20 principle. What percentage of your digital projects are standardized vs. one-off customizations? If less than 70% is standardized, you're well short of the 80% core and building a transformation that won't scale.

→ Require a "driver action plan" for every metric you choose to monitor. Before deploying any new dashboard, document: What actions will we take when this metric moves? Who is responsible? What's the escalation path?

→ Publish and enforce a data ontology standard for all new control system work. Require OEMs, integrators, and internal teams to follow your naming conventions. Yes, it slows down initial projects. It accelerates everything that comes after.